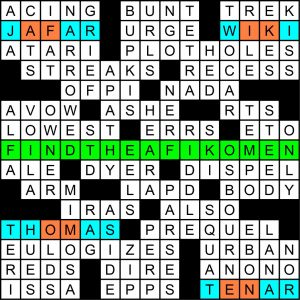

Matt here, doing a quick post of Aimee Lucido’s and my special Passover meta at the AVCX. Solvers were given the beautiful drawing below, created by GAMES Magazine (and New Yorker) legend Robert Leighton, and given these instructions:

The two children at this Seder (see attached drawing) are trying to 40-Across. But you can play 40-Across in this crossword grid, too! In fact, doing so will lead you to the contest answer.

So the hunt is on, and the big hint was the grid-spanning clue at 40-A: [Passover hunt where kids search for a hidden piece of matzoh, which is broken into four pieces in this grid — the contest answer is what the four pieces are hidden in]. That yields FIND THE AFIKOMEN, which is the small piece of broken-off matzoh traditionally hidden for kids to find at a Passover seder. So where is that afikomen hiding?

Well, it’s been broken up into four parts, AF/IK/OM/EN, and each of those bigrams can be found in exactly one grid entry:

14-A [“Aladdin 2: The Return of ___”] = JAFAR

16-A [Prefix with -pedia or -Leaks] = WIKI

56-A [Tank engine who’s friends with James, Sam, and Emily] = THOMAS

71-A [Main character in Ursula K. Le Guin’s “The Tombs of Atuan”] = TENAR

Let’s look at the letters those pieces of AFIKOMEN are hiding in:

J(AF)AR

W(IK)I

TH(OM)AS

T(EN)AR

Those spell out JAR WITH A STAR, which is the hiding place of the afikomen and also our meta answer (circled in the drawing below).
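For anyone who wants to check the extraction mechanically, here is a minimal Python sketch. It is only an illustration, not how the puzzle was built: the helper name `strip_piece` and the `pairs` list are made up for this example, and the entries and bigrams are the ones listed above.

```python
def strip_piece(entry: str, piece: str) -> str:
    """Remove the afikomen piece from a grid entry, leaving its hiding place."""
    # Each piece should sit in its entry exactly once; fail loudly otherwise.
    if entry.count(piece) != 1:
        raise ValueError(f"{piece!r} does not appear exactly once in {entry!r}")
    return entry.replace(piece, "", 1)


# (grid entry, hidden bigram) in grid order: 14-A, 16-A, 56-A, 71-A
pairs = [("JAFAR", "AF"), ("WIKI", "IK"), ("THOMAS", "OM"), ("TENAR", "EN")]

# The four bigrams reassemble into the afikomen itself.
afikomen = "".join(piece for _, piece in pairs)

# The letters left behind, read in order, give the meta answer without spaces.
leftover = "".join(strip_piece(entry, piece) for entry, piece in pairs)

print(afikomen)
print(leftover)
```

Adding the spaces back to the leftover letters gives the phrase JAR WITH A STAR.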

I enjoyed working on this meta quite a lot. Thanks to co-author Aimee Lucido for, in addition to her work on the grid and clues, coming up with the core idea of the meta; to AVCX editor Ben Tausig for shepherding this through and publishing it; and to illustrator and puzzlemaster Robert Leighton, whose work in GAMES et al. I’ve admired since the mid-1980s.

Enjoyed this one a lot, Matt and Aimee! Chag sameach!

What was the deal with the OSBOURNE clue? Ozzy is the only reason the others are famous, so why the half-hearted grudging admission that he’s part of their family?

I think it’s a winking acknowledgment that his name takes the clue from tough to trivial.

Exactly

I found the afikomen relatively easily. Since this is not part of the MGWCC, I had no idea how hard the meta would be.

So I simply submitted ‘In the Corners’, which turned out to be a sucky answer once I saw the real deal.

Well done Matt and Aimee! I should have tried a little harder!

Metas on the AVCX are on the gentler side, with one, maybe two, steps to find the meta answer.

When I finally realized “afikomen” has 8 letters, *broken into 4 pieces* means 2-letter words…

JAFAR was just SHOUTING at me the whole time but I couldn’t hear what it was saying until I was finally able to see the AF hiding the JAR.

My favorite detail is in the cartoon: the chair so the kids can climb up and reach the jar with a star. Really wonderful.

I never even noticed. Leighton!!

Really enjoyed this. Thanks!!

I managed to get stuck in a rabbit hole: there are four synonymous entries for slangy “nothing” – BUPKIS (the only one clued as such), ZIP, NADA, SQUAT.

Obviously this yielded me bupkis.

I know these rabbit holes are 99.99% coincidental, but I was really wondering this time if it was intentional.

Question: Practically speaking, are the four pieces typically hidden together, or in disparate locations?

Depends on the number of children looking. In my house, for example, we broke one big piece in half, and hid the two pieces in different places — with the difficulty of finding each calibrated to their respective ages.

I kind of liked how in the image the children were cool with looking for the same piece(s) in one place, together. I’d like to go with that kind of competition-discouraging approach next year. Life imitating art!

Now I’m wondering if the tradition of hiding Easter eggs for kids to find is a complete appropriation of Passover’s “find the afikomen.”

Loved this one! Thanks!

Love this!